Automated Front-End Tests with GitHub Actions & Playwright


Overview

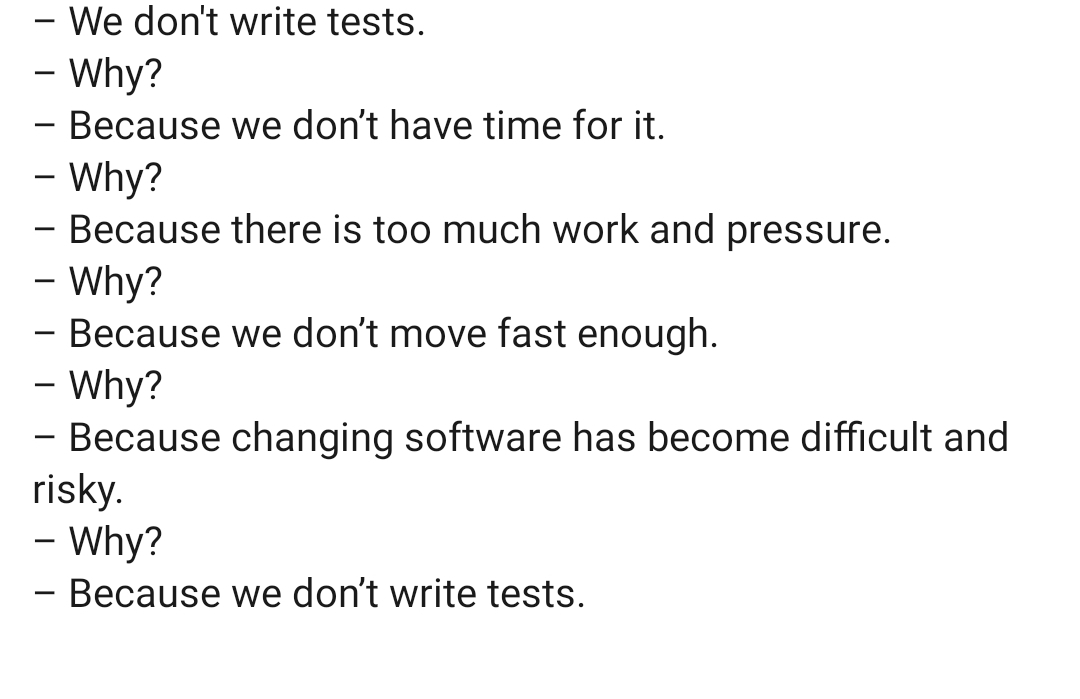

Let's be honest: testing in enterprise web applications is often minimal and ad-hoc.

The pervasive lack of automated tests stems from a perception that the effort of setting them up isn't worth the return. As a result, the people who would implement the tests can't make a compelling case for the client to invest in them, so the tests never get written. Let's challenge that belief by showing how little effort a useful baseline actually takes.

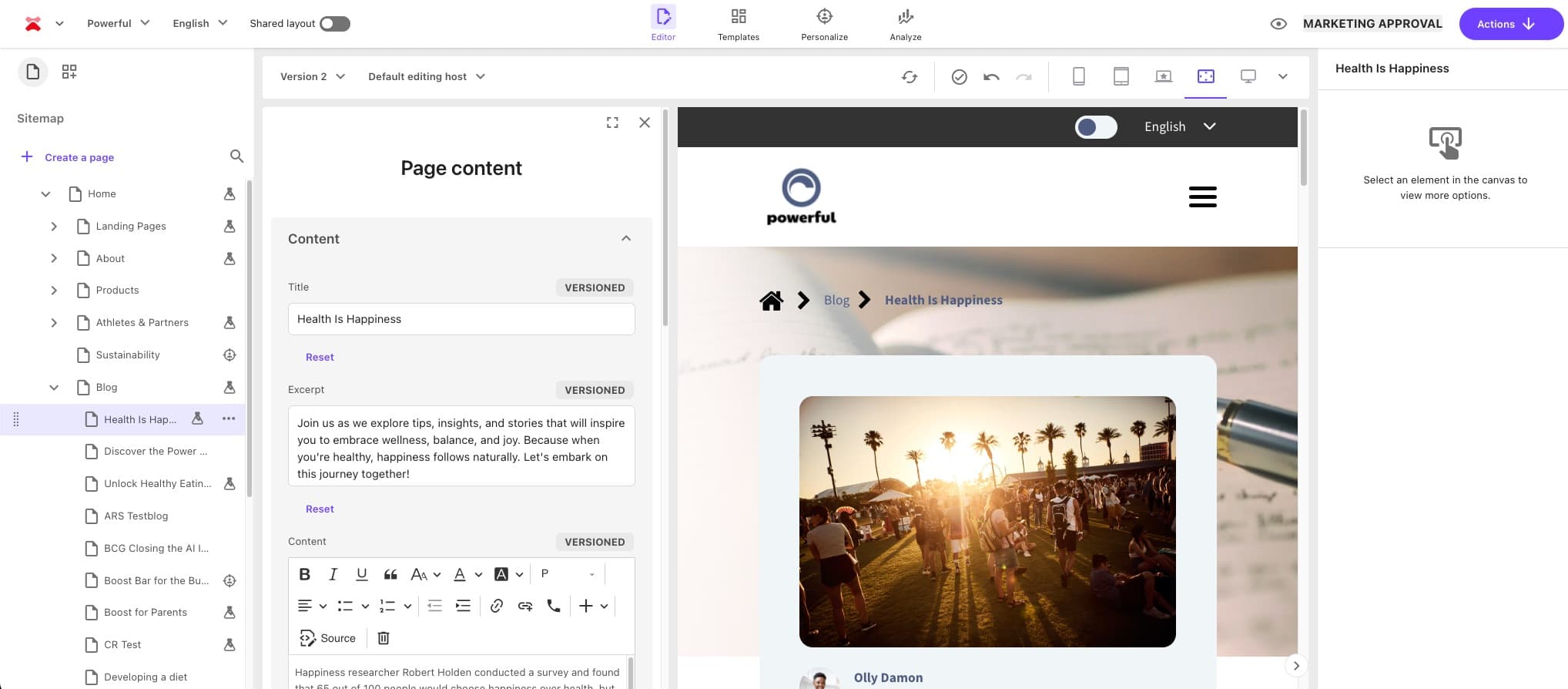

The Stack

The post was written with the following stack in mind, so some details may vary in your case:

- Sitecore XM running on Azure PaaS

- GitHub Actions (task runner agent)

- Next.js (head application)

- Vercel (hosting)

- Playwright (for testing)

Proposed Approach

The 200 IQ take is that a tiny amount of coverage can produce 99% of the results. A few simple but crucial tests, quickly implemented, are infinitely better than no tests at all. On a long enough timeline, something on the site is going to fail or regress, whether through human error or force majeure. My goal is to spare you the embarrassment of only finding out about the issue from an angry customer (which certainly has never happened to me and I'm offended that you would even conceive of the possibility).

Types of Unexpected Issues

Let's think through all the different types of unexpected issues that a website might experience on a long enough timeline:

- Net-new content issues that were not previously known or accounted for (often isolated, but still problematic). For example, a content author deselects every item in a Multilist field and publishes the page without checking it; the component that references the field starts throwing an error because the developer never accounted for an empty list.

- Regressions introduced by devs

- Third party dependency failures / outages

- Infrastructure issues (CDN cache problems, load balancer issues, database timeouts)

- Browser-specific front end issues

- Performance degradation (slow page / API loads, timeouts)

- Security issues (SSL cert expiration, CORS policy changes, CSP violations, authentication issues)

- Data / content issues, such as publishing failures, asset 404s, and formatting changes

The Cost of Unexpected Issues

The cost of an unexpected issue depends on the nature of the issue, but ultimately the goal is the same: we are trying to prevent churn.

Let's assume that the scenario below arose because of a regression that was recently deployed by a developer:

- Client discovers issue

- Client emails you about it

- You drop everything you are doing to reply and investigate the issue

- You determine the root cause of the issue and notify the client

- You create a fix

- You test the fix

- You deploy the fix

- Etc.

How long resolution takes varies widely, from 10 minutes to days or even weeks, with effort and disruptions peppered throughout (chronic churn).

Let's keep it simple and conservative and say that the cost is between 1 and 8 man-hours per incident.

Also, keep in mind the hidden cost of these issues: rack up enough of them, and your client may begin to distrust you, and you may develop a reputation as a low-quality partner.

The Cost of Setting Up Automated Tests

Since I've already done most of the hard work for you, you could probably adapt and deploy these automated tests in less than a day, assuming you've got a modern, fast continuous deployment pipeline. If it takes much longer than that, you're doing something wrong.
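The cost comparison boils down to simple break-even arithmetic. The sketch below assumes one 8-hour working day of setup (the upper bound of "less than a day") and the 1 to 8 hours per incident estimated above; both inputs are assumptions, so swap in your own numbers.

```typescript
// Number of avoided incidents after which the test setup has paid for itself.
// Assumption: setup cost and per-incident cost are both measured in hours of effort.
export function breakEvenIncidents(setupHours: number, hoursPerIncident: number): number {
  if (setupHours < 0 || hoursPerIncident <= 0) {
    throw new RangeError('setupHours must be >= 0 and hoursPerIncident must be > 0');
  }
  // Round up: a partially avoided incident does not count as "paid back".
  return Math.ceil(setupHours / hoursPerIncident);
}

// Assumed inputs: one 8-hour day of setup, incidents costing 1, 4 or 8 hours each.
const SETUP_HOURS = 8;
for (const perIncident of [1, 4, 8]) {
  const n = breakEvenIncidents(SETUP_HOURS, perIncident);
  console.log(`${perIncident}h incidents: setup pays off after ${n} avoided incident(s)`);
}
```

With those inputs, the setup pays for itself after somewhere between one avoided incident (8-hour incidents) and eight (1-hour incidents), before counting the hidden reputational cost.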

The Most Basic Tests

We're going to start with laughably basic tests:

- Is my global search returning results?

- Does my carousel component on page x have a minimum of 3 items in it?

- Does my component x contain something that I'm always expecting to be there?

- Do pages of type x always have component y that all of them should have?

- Does my GraphQL endpoint return key information that I'm expecting?
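Here is the first check from that list, global search, sketched as a reusable helper. The `/search?q=` route, the example query, and any result selector you pass in are hypothetical placeholders for your own site. The helper types `page` against a minimal structural slice of Playwright's `Page`/`Locator` API so the sketch stays self-contained; a real Playwright `page` fits that shape.

```typescript
// Minimal structural slice of Playwright's Page/Locator API used by the helper.
// A real Playwright `page` satisfies these interfaces.
export interface LocatorLike {
  first(): LocatorLike;
  waitFor(options?: {
    state?: 'attached' | 'detached' | 'visible' | 'hidden';
    timeout?: number;
  }): Promise<void>;
  count(): Promise<number>;
}

export interface PageLike {
  goto(url: string): Promise<unknown>;
  locator(selector: string): LocatorLike;
}

// Hypothetical search route (/search?q=...); adjust to your site's URL format.
export function buildSearchUrl(baseURL: string, query: string): string {
  const url = new URL('/search', baseURL);
  url.searchParams.set('q', query);
  return url.toString();
}

// Opens the search page and returns how many result elements were rendered.
// Rejects (via waitFor's timeout) if no result appears within `timeoutMs`.
export async function countSearchResults(
  page: PageLike,
  baseURL: string,
  query: string,
  resultSelector: string,
  timeoutMs = 10_000,
): Promise<number> {
  await page.goto(buildSearchUrl(baseURL, query));
  const results = page.locator(resultSelector);
  // Search results are often rendered client-side, so wait for the first one
  // before counting (count() itself does not wait).
  await results.first().waitFor({ state: 'attached', timeout: timeoutMs });
  return results.count();
}
```

Inside a spec, this could read `expect(await countSearchResults(page, baseURL!, 'insurance', 'li[class*="searchResult_"]')).toBeGreaterThan(0);`, where the query and selector are made up for illustration.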

The Approach

Hit the PROD site in a few key areas and deliver the value right away. Then, optionally, extend the tests down the stack to lower environments, where you'll have to contend with more access restrictions.

Considerations

Below are all of the interesting considerations I encountered while working on this:

- When the tests should run (cron, on-demand, pull request creation, successful Vercel builds)

- Keeping test-driven web requests out of server-side analytics (you can't prevent them from showing up, but you can add request headers that identify them)

- Failure notifications (who is notified in each failure scenario)

- Whether the tests run on preview builds, production builds, or both

- If and how tests should run after content publishing operations

- Branch requirements (should updates to a branch be contingent on the tests passing?)

- Blocking requests to analytics services such as Google Analytics, LinkedIn, etc.

- Reducing bandwidth consumption (no need to load images for simple text-based tests)

- Preventing WAF access issues (ensuring that the agent doesn't get blocked)

- GitHub billing: each plan includes a fixed number of Actions minutes. Enterprise plans include 50,000 per month, after which usage is billed; that is more than enough for basic tests. It's a tragedy if you're not using a good chunk of those minutes every month. Also keep storage limits (often consumed by caches) in mind.

- GraphQL endpoint URLs and API keys
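Server-side analytics can't be stopped from seeing test traffic, but the `x-automation-test: playwright` header that the example Playwright config sets through `extraHTTPHeaders` makes that traffic identifiable. Below is a sketch of the check that server-side code (a log filter, an analytics hook, or middleware) could run. The helper and constant names are hypothetical, and `HeaderSource` is just the shape of the standard `Headers#get` method.

```typescript
// Must match the extraHTTPHeaders entry in playwright.config.ts.
export const AUTOMATION_HEADER = 'x-automation-test';
export const AUTOMATION_VALUE = 'playwright';

// Anything with a Headers-style get(). The Fetch API `Headers` object (for example
// `request.headers` in Next.js middleware) has this shape.
export interface HeaderSource {
  get(name: string): string | null | undefined;
}

// True when the request came from the Playwright suite, so server-side code can
// tag it or exclude it from logs and analytics reports.
export function isAutomationRequest(headers: HeaderSource): boolean {
  const value = headers.get(AUTOMATION_HEADER);
  return value?.trim().toLowerCase() === AUTOMATION_VALUE;
}
```

The same header could back a WAF allow rule. Keep in mind that anyone can send a request header, so treat it as a label rather than as authentication.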

Many of the above are already accounted for / mitigated in the example code, particularly around blocking unnecessary requests, minimizing bandwidth consumption, and minimizing Actions minutes consumption.
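To sanity-check Actions consumption against an included-minutes quota, a rough estimator helps. It relies on GitHub's billing rule that each job's usage is rounded up to the nearest whole minute, and it only models Linux runners (other runner operating systems are billed at different rates). The run counts and the 3.2-minute job duration are assumptions, not measurements.

```typescript
// One recurring source of workflow runs (e.g. a cron schedule or a deploy hook).
export interface RunSource {
  label: string;
  runsPerMonth: number;
  jobsPerRun: number;
  minutesPerJob: number; // wall-clock duration of one job; may be fractional
}

// Estimated billed minutes per month. Each job is rounded up to the next whole
// minute, as GitHub does; Linux runners assumed.
export function billedMinutesPerMonth(sources: RunSource[]): number {
  return sources.reduce(
    (total, s) => total + s.runsPerMonth * s.jobsPerRun * Math.ceil(s.minutesPerJob),
    0,
  );
}

// Assumed workload: nightly cron plus ~20 Vercel preview deployments per working
// day (22 working days), each running one ~3.2-minute e2e job.
const usage = billedMinutesPerMonth([
  { label: 'nightly cron', runsPerMonth: 30, jobsPerRun: 1, minutesPerJob: 3.2 },
  { label: 'preview deployments', runsPerMonth: 20 * 22, jobsPerRun: 1, minutesPerJob: 3.2 },
]);

const INCLUDED_MINUTES = 50_000; // Enterprise allowance from the list above
const share = (usage / INCLUDED_MINUTES) * 100;
console.log(`${usage} billed minutes per month, ${share.toFixed(1)}% of ${INCLUDED_MINUTES}`);
```

Under these assumptions the suite uses only a few percent of the allowance, which is why basic tests barely dent it.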

Example Code

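Install the packages the files below import (`npm install -D @playwright/test dotenv graphql-request graphql`) and a browser (`npx playwright install chromium`), then add the following files to get the tests running.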

playwright.config.ts

```typescript
import { defineConfig, type PlaywrightTestConfig } from '@playwright/test';
import * as dotenv from 'dotenv';

// Load TEST_URL, GRAPHQL_ENDPOINT and SITECORE_API_KEY from a local .env file
dotenv.config();

const isCI = !!process.env.CI;

const config: PlaywrightTestConfig = {
  testDir: './tests',

  // Concurrency and retries
  workers: isCI ? 4 : undefined, // 4 on CI, Playwright's default locally
  fullyParallel: true,
  retries: isCI ? 2 : 0, // Retry on CI only

  // Timeouts
  timeout: 60_000, // 60 seconds per test
  globalTimeout: 10 * 60_000, // 10 minutes for the entire run
  expect: { timeout: 10_000 }, // 10 seconds for expect() assertions

  // Fail the CI build if test.only was left in the source code
  forbidOnly: isCI,

  // Shared settings for all tests
  use: {
    headless: true,
    baseURL: process.env.TEST_URL || 'https://www.YOUR_LIVE_SITE.com',

    // Helps us identify (and allow-list) the test runner's requests
    extraHTTPHeaders: { 'x-automation-test': 'playwright' },

    navigationTimeout: 30_000, // 30 seconds for page.goto / click-triggered navigation
    actionTimeout: 30_000, // 30 seconds for each action

    trace: 'on-first-retry', // Collect a trace when retrying a failed test
  },

  projects: [
    { name: 'chromium', use: { browserName: 'chromium' } },
  ],
};

export default defineConfig(config);
```

tests/fixtures.ts

Playwright has no launch option that wraps every browser context, so the request blocking is attached by overriding Playwright's built-in `context` fixture. Every test that imports `test` from this file gets it.

```typescript
import { test as base, expect } from '@playwright/test';
import { blockExternalHosts } from '../src/util/block-external-hosts';

export const test = base.extend({
  context: async ({ context }, use) => {
    await blockExternalHosts(context);

    // Abort all image / font requests: we don't currently use them in our tests and we
    // don't want to consume excessive bandwidth. Route handlers run in reverse order of
    // registration, so this one runs first and passes everything else on (fallback)
    // to the host-blocking handler.
    await context.route('**/*', (route) => {
      if (['image', 'font'].includes(route.request().resourceType())) {
        return route.abort();
      }
      return route.fallback();
    });

    await use(context);
  },
});

export { expect };
```

src/util/block-external-hosts.ts

```typescript
// Util for the test suite: abort all network requests except those needed to load the
// site itself. This prevents loading analytics, ads, pixels, cookie consent, etc.
import type { BrowserContext } from '@playwright/test';

export async function blockExternalHosts(
  context: BrowserContext,
  extraAllowedHosts: string[] = [],
): Promise<void> {
  // The domains that we expect Playwright to hit (subdomains are allowed automatically)
  const ALLOWED_HOSTS: string[] = [
    'www.YOUR_LIVE_SITE.com',
    'YOUR_LIVE_SITE.com',
    'vercel.com',
    'vercel.app',
    'localhost',
    '127.0.0.1',
    ...extraAllowedHosts,
  ].map((host) => host.toLowerCase());

  function hostIsAllowed(hostname: string): boolean {
    hostname = hostname.toLowerCase();
    // Exact match, or a subdomain of an allowed host
    return ALLOWED_HOSTS.some((allowed) => hostname === allowed || hostname.endsWith('.' + allowed));
  }

  // Intercept all requests
  await context.route('**/*', (route) => {
    const url = route.request().url();

    // Leave non-HTTP(S) schemes (data:, blob:, ...) alone
    if (!/^https?:/i.test(url)) {
      return route.continue();
    }

    const { hostname } = new URL(url);
    if (hostIsAllowed(hostname)) {
      return route.continue();
    }

    return route.abort();
  });

  // Set analytics globals before page scripts run, to prevent tracking and
  // console errors from the aborted scripts
  await context.addInitScript(() => {
    const w = window as any;
    w['ga-disable-all'] = true;
    w['ga-disable'] = true;
    w.lintrk = () => {};
    w.clarity = () => {};
    w.fbq = () => {};
  });
}
```

tests/my-playwright-tests.spec.ts

Here we perform two types of tests:

- Ensuring that a GraphQL endpoint returns what we are expecting

- Ensuring that a number of pages contain the components we are expecting

```typescript
// The .spec.ts suffix matches Playwright's default testMatch, so the runner finds this file.
import { GraphQLClient, gql } from 'graphql-request';
// Use the extended `test` so the request blocking applies to these tests
import { test, expect } from './fixtures';

test('SOME_PAGE returns article cards', async ({ page, baseURL }) => {
  const path = '/SOME_PAGE';
  console.log(`Testing article cards on ${baseURL + path}`);
  await page.goto(baseURL + path, { waitUntil: 'domcontentloaded' });

  const cardContainer = page.locator('div[class*="articleDirectory_resultsContainer__"]');
  const ul = cardContainer.locator('ul[class*="cardGrid_cardGrid__"]');
  await expect(ul).toHaveCount(1);

  const cards = ul.locator('li div[class*="articleCard_card__"]');
  // count() does not auto-wait, so wait for the first card before counting
  await expect(cards.first()).toBeAttached();
  expect(await cards.count()).toBeGreaterThanOrEqual(10);
});

test('locationPanel appears on office pages', async ({ page, baseURL }) => {
  const endpoint = process.env.GRAPHQL_ENDPOINT;
  const apiKey = process.env.SITECORE_API_KEY;
  if (!endpoint || !apiKey || !baseURL) throw new Error('Missing environment variables');

  const client = new GraphQLClient(endpoint, { headers: { sc_apikey: apiKey } });

  const queries = {
    // First three children of the offices folder
    office: gql`
      query {
        item(path: "/sitecore/content/ACME/home/offices", language: "en") {
          children(first: 3) {
            results {
              url {
                path
              }
            }
          }
        }
      }
    `,
    // Full layout data for a single item
    render: gql`
      query GetRendered($path: String!, $language: String!) {
        item(path: $path, language: $language) {
          rendered
        }
      }
    `,
  };

  // 1. Collect the office page paths
  const officeData: any = await client.request(queries.office);
  const paths: string[] = (officeData?.item?.children?.results ?? [])
    .filter((r: any) => r?.url?.path)
    .map((r: any) => r.url.path);

  // 2. Keep only the pages whose layout includes the locationPanel component
  const testPaths: string[] = [];
  for (const path of paths) {
    try {
      const fullPath = '/sitecore/content/ACME/home' + path;
      const data: any = await client.request(queries.render, { path: fullPath, language: 'en' });
      const renderings = data?.item?.rendered?.sitecore?.route?.placeholders?.['jss-main'] ?? [];
      if (renderings.some((r: any) => r.componentName === 'locationPanel')) {
        testPaths.push(path);
      }
    } catch (e: any) {
      console.warn(`Skipping ${path}: ${e.message}`);
    }
  }

  if (!testPaths.length) throw new Error('No pages found with locationPanel component');

  // 3. Visit each page and check that the component actually rendered
  for (const path of testPaths) {
    await test.step(`Visiting ${path}`, async () => {
      console.log(`Testing locations component on ${baseURL + path}`);
      await page.goto(baseURL + path, { waitUntil: 'domcontentloaded' });

      const ourLocationsContainer = page.locator('section[class*="ourLocations_container__"]');
      await expect(ourLocationsContainer).toBeVisible();

      const ourLocationsList = ourLocationsContainer.locator('ul[class*="carousel_carousel__"]');
      await expect(ourLocationsList).toHaveCount(1);

      // At least one location card must be present
      const ourLocationsCards = ourLocationsList.locator('li div[class*="ourLocations_card__"]');
      await expect(ourLocationsCards.first()).toBeAttached();
    });
  }
});
```

Once all of the Playwright tests are in place and confirmed working via `npx playwright test`, we can add the GitHub Actions workflow.

.github/workflows/run-front-end-tests.yml

This is the workflow definition that the GitHub Actions runner executes.

GitHub only shows the manual "Run workflow" button, and only runs the nightly schedule, once this file is on the default branch, so merge it into your main branch first. Until then, you can still run the tests locally on demand with `npx playwright test`.

```yaml
name: Run Front End Tests

on:
  workflow_dispatch: # Allows manual runs from the GitHub UI
  schedule: # Run nightly at 00:00 UTC
    - cron: '0 0 * * *'
  # repository_dispatch: # Run when a Vercel deployment succeeds
  #   types: [vercel.deployment.success]

permissions:
  contents: read # Read-only token; checkout only needs to read the repository

jobs:
  e2e:
    # if: github.event_name != 'repository_dispatch' || github.event.client_payload.target == 'preview'
    concurrency: # Automatically cancel any running builds from the same ref
      group: e2e-${{ github.ref }}
      cancel-in-progress: true
    runs-on: ubuntu-latest
    steps:
      - name: Checkout commit
        uses: actions/checkout@v4
        # Use the exact commit that Vercel built; use the current commit for manual/cron runs
        with:
          ref: ${{ github.event.client_payload.git.sha || github.sha }}

      - name: Set up Node.js
        uses: actions/setup-node@v4
        with:
          node-version: 18
          cache: npm
          cache-dependency-path: package-lock.json

      - name: Restore Playwright browsers
        id: pw-cache
        uses: actions/cache@v4
        with:
          path: ~/.cache/ms-playwright # Playwright browser binaries location on Linux
          key: pw-${{ runner.os }}-${{ hashFiles('**/package-lock.json') }}

      - name: Install dependencies
        run: npm ci --no-audit --prefer-offline --progress=false

      - name: Install Chromium if cache missed
        if: steps.pw-cache.outputs.cache-hit != 'true'
        run: npx playwright install chromium --with-deps

      # The cache only restores browser binaries, not the OS packages Chromium needs
      - name: Install Chromium system dependencies if cache hit
        if: steps.pw-cache.outputs.cache-hit == 'true'
        run: npx playwright install-deps chromium

      - name: Run Playwright tests
        run: |
          echo "Running tests against: $TEST_URL"
          npx playwright test
        env:
          # Use the Vercel preview URL; use PROD for manual/cron runs
          TEST_URL: ${{ github.event.client_payload.url || 'https://www.YOUR_LIVE_SITE.com' }}
          GRAPHQL_ENDPOINT: ${{ secrets.GRAPHQL_ENDPOINT }}
          SITECORE_API_KEY: ${{ secrets.SITECORE_API_KEY }}
```

Future Considerations

Once a few basic tests are in place, you can explore other interesting cases such as:

- Does my site load if ad blockers are enabled?

- Does my site look fine when browser dark mode is enabled?

Although those are more advanced test cases, the same approach applies: find the simplest way to test them.

Playwright can also be used to take screenshots, which means you can do visual regression testing.
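Both of the last two ideas can be wired into the config. Here is a minimal sketch that assumes the single `chromium` project from the example config. It adds a second project that emulates dark mode through Playwright's `colorScheme` option (which sets `prefers-color-scheme`), plus project-wide tolerances for screenshot comparisons. The `chromium-dark` project name and the 1% tolerance are assumptions to tune. Merge the relevant fields into your existing playwright.config.ts.

```typescript
import { defineConfig } from '@playwright/test';

export default defineConfig({
  testDir: './tests',
  expect: {
    // Screenshot assertions fail when more than 1% of pixels differ (assumed tolerance);
    // CSS animations are disabled so frames are stable between runs.
    toHaveScreenshot: { maxDiffPixelRatio: 0.01, animations: 'disabled' },
  },
  projects: [
    { name: 'chromium', use: { browserName: 'chromium' } },
    // Same tests, rendered with prefers-color-scheme: dark
    { name: 'chromium-dark', use: { browserName: 'chromium', colorScheme: 'dark' } },
  ],
});
```

With that in place, a spec can assert `await expect(page).toHaveScreenshot('home.png', { fullPage: true });`. When no baseline exists yet, Playwright writes one and fails the test; `npx playwright test --update-snapshots` regenerates baselines on purpose. Baselines are stored per project and per platform, so generate them on the same OS as the CI runner. Note also that the example suite aborts image and font requests, so screenshots taken through those fixtures will render without them.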

And remember the struggle:

-MG